Nvidia Wants To Put A GB300 Superchip On Your Desk With DGX Station, Spark PCs

GTC After a Hopper hiatus, Nvidia's DGX Station returns, now armed with an all-new desktop-tuned Grace-Blackwell Ultra Superchip capable of churning out 20 petaFLOPS of AI performance.

The system marks the first time Nvidia has updated its DGX Station lineup since the Ampere GPU generation. Its last DGX Station was an A100-based system with quad GPUs and a single AMD Epyc processor that was cooled by a custom refrigerant loop.

By comparison, Nvidia's new Blackwell-based system is far simpler: it's powered by a single Blackwell Ultra GPU backed, as the GB300 codename would suggest, by a Grace CPU. In total, we're told the system will feature 784 GB of "unified memory between the CPU's LPDDR5x DRAM and the GPUs' HBM3e."

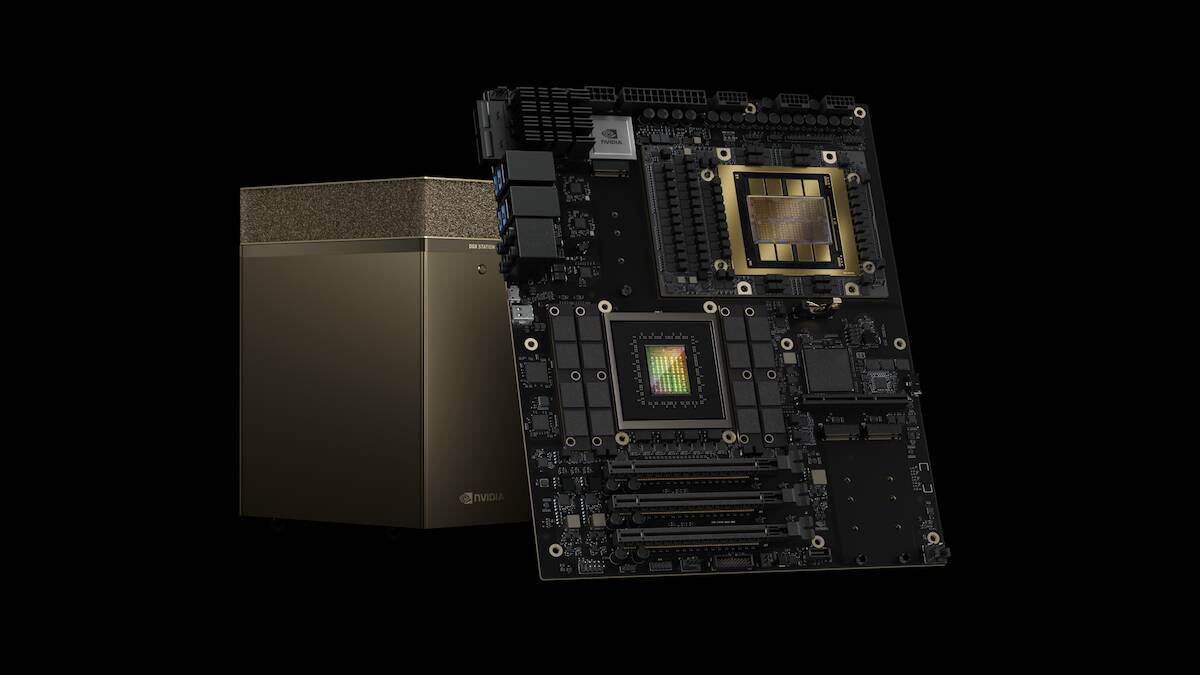

Here's a close-up view of the GB300 Desktop Superchip at the heart of the new DGX Station

However, the system doesn't just cram the CPU and GPU onto the same mainboard. It also features an 800 Gbps ConnectX-8 NIC onboard in case you want to create a mini cluster of DGX Stations. In addition to its own gold-clad boxen, Nvidia says the GB300 Desktop board will also be available from OEMs including ASUS, BOXX, Dell, HP, Lambda, and Supermicro later this year.

Alongside the DGX Station, Nvidia has started taking reservations for its Project Digits box. Now called the DGX Spark, the $3,000 system, first teased at CES earlier this year, is powered by a GB10 Grace Blackwell system-on-chip. In terms of performance, the system boasts up to 1,000 trillion operations per second of AI compute and 128 GB of unified system memory in addition to integrated ConnectX-7 networking.

Nvidia's pint-sized Spark workstation promises 1,000 TOPS of AI performance and 128 GB of unified memory

The L40S gets an RTX PRO replacement

Alongside its new workstations, Nvidia also unveiled today at its GPU Technology Conference (GTC) a series of new PCIe-based GPUs aimed at professional workstations and server applications. At the top of the stack are Nvidia's 96 GB RTX PRO 6000-series server and workstation chips.

The parts are designed to replace Nvidia's aging L40S and RTX 6000 Ada graphics cards, and boast between 2.5x and 2.7x higher floating-point performance, at 3,753 and 4,030 teraFLOPS of FP4 compute for the server and workstation cards, respectively.

Of course, this doesn't tell the whole story, as the RTX PRO 6000 cards support the new FP4 datatype, while the Ada Lovelace GPUs do not. Normalized for FP8 performance, the gains aren't nearly so impressive, up roughly 28 percent on the server card and 38 percent for the workstation card.
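The FP8 normalization is simple arithmetic: if FP8 runs at half the FP4 rate, halving the headline figure halves the speedup. The sketch below reproduces the percentages under two assumptions that don't come from this article: that FP8 throughput is exactly half the FP4 figure, and that the predecessors' sparse FP8 ratings are 1,466 teraFLOPS (L40S) and 1,457 teraFLOPS (RTX 6000 Ada), as we understand Nvidia's spec sheets. The `speedups` helper is hypothetical.

```python
# Sketch: normalize the RTX PRO 6000 FP4 headline figures to FP8 before
# comparing them with the Ada Lovelace cards they replace.
# Assumptions (not from the article): FP8 throughput is half the FP4 rate,
# and the predecessors' sparse FP8 figures are 1,466 TFLOPS (L40S) and
# 1,457 TFLOPS (RTX 6000 Ada).

# (new card, new FP4 TFLOPS, old card, old FP8 TFLOPS)
COMPARISONS = [
    ("RTX PRO 6000 server", 3753, "L40S", 1466),
    ("RTX PRO 6000 workstation", 4030, "RTX 6000 Ada", 1457),
]


def speedups(new_fp4: float, old_fp8: float) -> tuple[float, float]:
    """Return (headline FP4-vs-FP8 ratio, like-for-like FP8 ratio)."""
    headline = new_fp4 / old_fp8
    new_fp8 = new_fp4 / 2  # assumed: FP8 runs at half the FP4 rate
    return headline, new_fp8 / old_fp8


for new_name, fp4, old_name, fp8 in COMPARISONS:
    headline, normalized = speedups(fp4, fp8)
    print(f"{new_name} vs {old_name}: {headline:.2f}x headline, "
          f"{(normalized - 1) * 100:.0f}% faster at FP8")
```

Under those assumptions the headline gains work out to about 2.56x and 2.77x, while the like-for-like FP8 gains shrink to roughly 28 and 38 percent.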

With that said, FP4 support is good for more than just juicing Nvidia's FLOPS claims. It also has the benefit of shrinking model sizes from 1-2 bytes per parameter at FP8 or FP16 to just 4 bits, which means enterprises can cram larger, more capable models into fewer GPUs than before.
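The capacity savings follow directly from bits per parameter. A minimal sketch, using a 70-billion-parameter model as an example and counting weights only; it ignores KV cache, activations, and the per-block scale factors real FP4 formats carry, so actual memory use will be somewhat higher. The `weight_bytes` helper is hypothetical.

```python
# Sketch: approximate weight footprint of a model at different precisions.
# Weights only: KV cache, activations, and per-block scale factors used by
# real FP4 formats are deliberately ignored.

BITS_PER_PARAM = {"FP16": 16, "FP8": 8, "FP4": 4}


def weight_bytes(num_params: int, bits: int) -> int:
    """Bytes needed to store num_params weights at the given bit width."""
    return num_params * bits // 8


if __name__ == "__main__":
    params = 70_000_000_000  # e.g. a 70B model such as Llama 3.3 70B
    for fmt, bits in BITS_PER_PARAM.items():
        gb = weight_bytes(params, bits) / 1e9  # decimal gigabytes
        print(f"{fmt}: {gb:.0f} GB")
```

For a 70B model that comes to roughly 140 GB at FP16, 70 GB at FP8, and 35 GB at FP4.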

Today, massive 600 billion-plus parameter models from the likes of DeepSeek, OpenAI, Anthropic, or Google often end up stealing the limelight. However, these models are usually overkill for the kinds of retrieval-augmented generation or customer service chatbots enterprises are interested in deploying.

Because of this, models in the 24-70 billion parameter range have become quite popular: they're large enough to get the job done and relatively easy to fine-tune, but still big enough to be impractical to deploy on a single, more modest GPU like the L40S. Meta's Llama 3.3 70B, Mistral-Small V3, Alibaba's QwQ, and Google's Gemma 3 are just a handful of the mid-sized models aimed at enterprise deployments.

With 96 GB of capacity, Nvidia's RTX PRO 6000-series cards can now fit models on a single card that previously would have required two L40Ses, or models twice that size if you're willing to use 4-bit floating-point quants.
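Working the other way, you can estimate the largest model whose weights fit on one card. This sketch assumes 48 GB for the L40S (half the RTX PRO 6000's 96 GB, since the new cards offer twice their predecessors' capacity) and again counts weights only, so practical limits will be lower once KV cache and runtime overhead are included. The `max_params_billions` helper is hypothetical.

```python
# Sketch: largest model (in billions of parameters) whose weights alone fit
# in a given amount of GPU memory. Ignores KV cache and runtime overhead,
# so real-world limits are lower.

CARDS_GB = {"L40S": 48, "RTX PRO 6000": 96}  # decimal GB
BITS = {"FP8": 8, "FP4": 4}


def max_params_billions(capacity_gb: int, bits: int) -> float:
    """Parameters (in billions) that fit in capacity_gb at `bits` per weight."""
    capacity_bytes = capacity_gb * 10**9
    return capacity_bytes * 8 / bits / 1e9


for card, gb in CARDS_GB.items():
    limits = ", ".join(
        f"{fmt} ~{max_params_billions(gb, b):.0f}B" for fmt, b in BITS.items()
    )
    print(f"{card} ({gb} GB): {limits}")
```

By this rough measure, a 70B model at FP8 fits on one 96 GB card but not on one 48 GB card, and dropping to FP4 doubles the ceiling on either.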

In addition to offering twice the capacity of their predecessors, the cards also offer between 66 and 88 percent higher memory bandwidth at 1.6-1.8 TBps for the server and workstation parts respectively.

Nvidia's new RTX Pro 6000 accelerators will be offered in passively cooled server and actively cooled workstation designs

If you're wondering why there's such a large variance between the server and workstation cards, we're told the main difference between the two is cooling. The workstation card is equipped with a larger active cooler reminiscent of the one found on the RTX 5090, while the server part features a more traditional passive heatsink.

Speaking of thermals, this higher floating-point performance and memory capacity do come at the cost of higher power draw. According to Nvidia, the workstation card is rated for up to 600 W of power draw, twice that of the RTX 6000 Ada it replaces.

The good news is that if you're looking for something more power-frugal, Nvidia will also offer a Max-Q variant of the RTX PRO 6000 aimed at high-end mobile workstations.

The mobile GPU promises the same 1.8 TBps of memory bandwidth, though it tops out at 24 GB and only manages 3.75 petaFLOPS of FP4 performance.

The RTX PRO 6000 Blackwell Workstation Edition will be available in April, with server versions arriving in May and mobile variants launching in June.

Nvidia also plans to release RTX PRO 5000, 4500, and 4000 cards across the workstation and mobile segments beginning later this summer. ®