Nvidia's Vera Rubin CPU, GPU Roadmap Charts Course For Hot-hot-hot 600 KW Racks

GTC Nvidia's rack-scale compute architecture is about to get really hot.

On stage at GTC on Tuesday, CEO Jensen Huang teased the tech titan's next generation of high-end datacenter GPUs and CPUs, codenamed Vera, Rubin, and Rubin Ultra.

This is going to get a little complicated, so just bear in mind: Vera is a CPU architecture, Rubin is a GPU architecture, and Rubin Ultra is going to be a refreshed version of Rubin.

Rubin Ultra, Huang boasted, is designed so that 576 GPU dies can be crammed into a single rack consuming 600 kW of power. However, before those systems arrive in late 2027, we'll first be getting Vera CPU cores and Rubin GPUs.

Named after American astronomer Vera Rubin, known for her research into dark matter, Vera is Nvidia's first Arm-compatible CPU architecture since Grace was announced in 2021.

Nvidia's next CPU and GPU architectures are called Vera and Rubin, revealed on stage at GTC in San Jose, California, by Jensen Huang

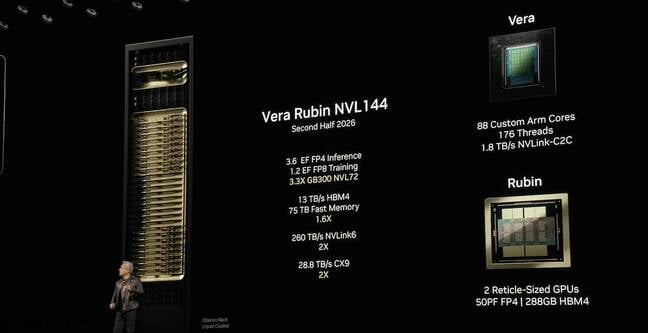

We're told that when the CPU arrives late next year, it will feature 88 custom-designed Arm cores (bye, bye Neoverse), with SMT pushing the thread count to 176 per socket. Like Grace, the chip will feature integrated NVLink chip-to-chip connectivity for interfacing with Nvidia's upcoming Rubin GPUs.

According to Huang, Rubin will borrow heavily from Blackwell's design architecture featuring two reticle-limited dies capable of up to 50 petaFLOPS at FP4 precision with 288 GB of HBM4 memory good for 13 TB/s of bandwidth.

Like Blackwell and Blackwell Ultra, the parts will be packaged as a Superchip and deployed in Nvidia's rack-scale NVL144 chassis. But before you get too excited, Nvidia won't be cramming twice as many GPUs into a rack this time around. Instead, Huang has simply changed his mind about what constitutes a GPU. Where Blackwell's twin dies were counted as a single logical chip, Rubin's are being counted as two GPUs on a package.

Compared to the GB300 NVL72, which, again, has the same number of GPU dies, Huang boasted that the Vera-Rubin NVL144 will deliver 3.3x higher floating point performance, topping 3.6 exaFLOPS of dense FP4 for inference and 1.2 exaFLOPS of FP8 compute for training.

The system will also feature Nvidia's 6th-gen NVLink switch fabric, which will provide an aggregate 260 TB/s (1.8 TB/s per die) of interconnect bandwidth, and will use the company's upcoming 1.6 Tbps ConnectX-9 NICs.
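The rack-level numbers above follow from the per-package figures, and a quick sanity check makes the "what counts as a GPU" accounting easier to see. The sketch below uses only figures quoted in this article; the constant and function names are ours, and it assumes the 50 petaFLOPS figure applies to a two-die package rather than a single die, which is the reading that matches the quoted 3.6 exaFLOPS rack total.

```python
# Back-of-the-envelope check of the Vera-Rubin NVL144 figures quoted above.
# All names here are illustrative; the numbers come from the article.
DIES_PER_PACKAGE = 2        # two reticle-limited dies per Rubin package
PACKAGE_FP4_PFLOPS = 50     # assumed to be per package, not per die
RACK_DIES = 144             # the "144" in NVL144 counts dies, not packages
NVLINK_TB_S_PER_DIE = 1.8   # NVLink bandwidth per die


def nvl144_totals():
    """Derive rack-level totals from the per-package and per-die figures."""
    packages = RACK_DIES // DIES_PER_PACKAGE
    return {
        "packages": packages,
        "fp4_exaflops": packages * PACKAGE_FP4_PFLOPS / 1000,
        "nvlink_tb_per_s": RACK_DIES * NVLINK_TB_S_PER_DIE,
    }


if __name__ == "__main__":
    for name, value in nvl144_totals().items():
        print(f"{name}: {value:.1f}")
```

Under those assumptions the rack holds 72 packages, the same count as an NVL72, and the FP4 and NVLink totals land on roughly 3.6 exaFLOPS and just under 260 TB/s, in line with the quoted figures.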

Also at GTC this week:

- Nvidia unveiled the Llama Nemotron family of reasoning models, designed to integrate into agentic AI systems. The first two, Nano and Super, are based on Meta's Llama 3.1 8B and 3.3 70B and have been pruned and fine-tuned to achieve on-demand reasoning capabilities. Both models are available as Nvidia Inference Microservices (NIMs) or via Hugging Face.

- An AI-Q Blueprint, an open source framework for building complex agentic AI services that can ingest and process information from multiple sources or databases.

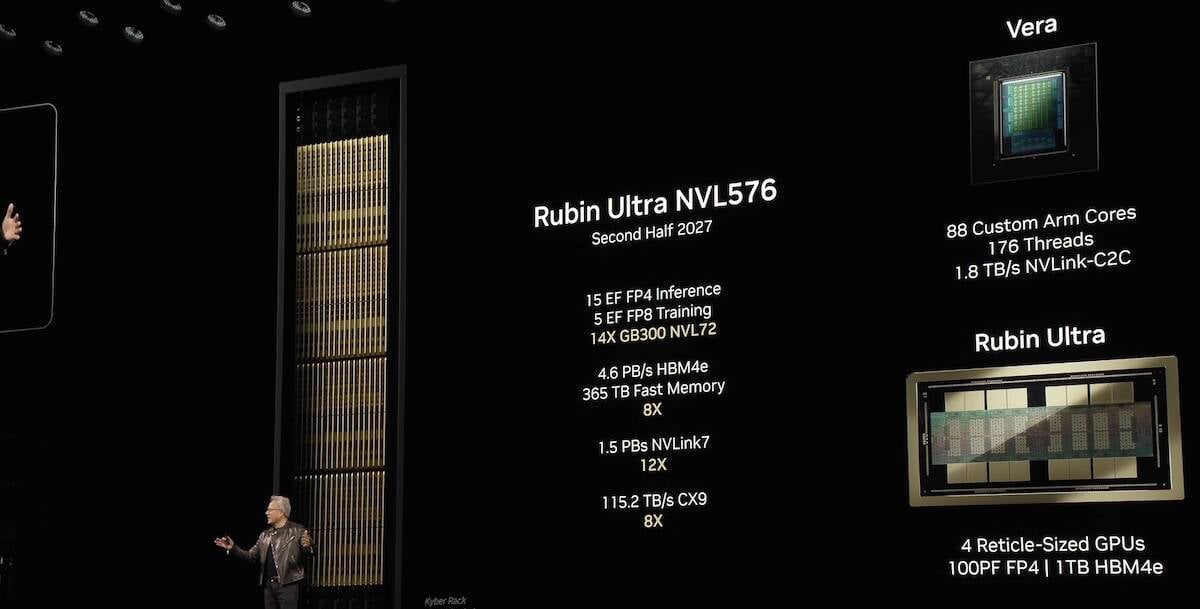

Where things really start to heat up is when Rubin Ultra lands in late 2027. That chip will double the number of GPU dies and HBM modules to four and 16 respectively.

By 2027 Nvidia CEO Jensen Huang expects racks to surge to 600 kW with the debut of the Rubin Ultra NVL576

Nvidia expects each Rubin Ultra package to top 100 petaFLOPS of FP4 performance and cram in 1 terabyte of even faster HBM4e memory.

144 of these packages, along with an unspecified number of Vera CPUs, will be crammed into a rack rated for 600 kW of power consumption and thermal output. In total, the chip giant expects the rack-scale system to deliver 15 exaFLOPS of FP4 inference perf and 5 exaFLOPS of FP8 for training.
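The same arithmetic explains where the "576" in NVL576 comes from and roughly how much power each package could draw. This is a rough sketch using only the numbers quoted here; the names are ours. Because the 600 kW rack rating also has to cover the Vera CPUs, switches, and everything else in the cabinet, the per-package power figure is only a ceiling, not a spec.

```python
# Rough arithmetic for the Rubin Ultra NVL576 rack described above.
# Names are illustrative; the inputs are the figures quoted in the article.
PACKAGES = 144
DIES_PER_PACKAGE = 4        # Rubin Ultra doubles Rubin's two dies
PACKAGE_FP4_PFLOPS = 100    # "top 100 petaFLOPS", so treat as a floor
PACKAGE_HBM_TB = 1          # HBM4e capacity per package
RACK_KW = 600               # rack rating, covering CPUs and switches too


def rubin_ultra_rack():
    """Derive rack-level totals and a per-package power ceiling."""
    return {
        "gpu_dies": PACKAGES * DIES_PER_PACKAGE,
        "fp4_exaflops_floor": PACKAGES * PACKAGE_FP4_PFLOPS / 1000,
        "hbm_tb": PACKAGES * PACKAGE_HBM_TB,
        "kw_per_package_ceiling": RACK_KW / PACKAGES,
    }


if __name__ == "__main__":
    for name, value in rubin_ultra_rack().items():
        print(f"{name}: {value:.2f}")
```

At exactly 100 petaFLOPS per package the rack would reach 14.4 exaFLOPS, so the quoted 15 exaFLOPS implies each package lands a little above that floor. The power ceiling works out to a bit over 4 kW per package.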

To keep all those tensor cores fed, Nvidia plans to transition to faster NVLink7 interconnects for chip-to-chip communications, but will stick with 1.6 Tbps ConnectX-9 NICs for scale-out communications.

However, the question remains how many existing datacenter facilities will be able to support such a dense configuration. The graphic shared on screen during Huang's keynote at GTC depicts a tall densely packed rack with systems that slot vertically into the cabinet — similar to some HPC clusters from Lenovo and HPE Cray.

Speaking of HPC vendors, super dense compute platforms aren't that uncommon. Last year we discussed Cray EX4000-series cabinets capable of supporting up to 300 kW. However, these aren't exactly your typical 19-inch racks, and these systems are usually deployed in a datacenter shell built specifically for this level of compute.

As such, trying to cool 600 kW of compute capacity in what appears to be a 19-inch or possibly a 21-inch OCP Open Rack form factor will almost certainly require a custom build.

Finally, Nvidia offered another peek at its datacenter roadmap. Its next GPU architecture after Rubin and Rubin Ultra will be named after American theoretical physicist Richard Feynman and is slated for 2028. Surely, you're joking. ®
